The era of intelligent machines has dawned, with machine learning taking center stage in various industries. By harnessing the power of data, machine learning applications revolutionize the way we approach problem-solving and make data-driven decisions.

From self-driving cars to personalized recommendation engines, the impact of machine learning is reshaping the world. This essay serves as a comprehensive guide to understanding the fundamentals and intricacies of machine learning, enabling enthusiasts and hobbyists to dive deep into an exciting and rapidly-advancing field.

Introduction to Machine Learning

Table of Contents

- 1 Types of Machine Learning

- 2 Applications of Machine Learning

- 3 Understanding the Significance of Machine Learning

- 4 Getting Started with Machine Learning

- 5 Machine Learning Libraries in Python

- 6 Machine Learning Libraries in R

- 7 Python vs R

- 8 Hands-on Projects

- 9 Handling Missing Data

- 10 Feature Scaling

- 11 Encoding Categorical Variables

- 12 Conclusion

- 13 Linear Regression

- 14 Logistic Regression

- 15 Decision Trees

- 16 Support Vector Machines (SVM)

- 17 Convolutional neural networks (CNNs)

- 18 Recurrent neural networks (RNNs)

Machine learning is a rapidly growing field within artificial intelligence that has garnered significant attention in recent years due to its ability to enable computers to learn and improve their performance based on the data they consume.

The history of machine learning dates back to the mid-20th century, beginning with simple algorithms that taught computers to play games like checkers and to recognize patterns in data.

As computers grew more powerful and data became more abundant, machine learning evolved into a complex discipline and gave birth to a multitude of algorithms and models that are used today in various applications.

Types of Machine Learning

There are three main types of machine learning: supervised learning, unsupervised learning, and reinforcement learning.

Supervised learning involves training a model using labeled data, which contains both input features and corresponding output labels.

In contrast, unsupervised learning involves working with data that is not labeled.

Reinforcement learning is a unique type of machine learning that revolves around the idea of an agent learning to perform actions in an environment to maximize a cumulative reward signal.

Applications of Machine Learning

One appealing aspect of machine learning is its versatility across a wide range of applications. For instance, machine learning algorithms are employed in fields like natural language processing, computer vision, and recommendation systems, among others.

In natural language processing, algorithms such as RNNs (recurrent neural networks) and transformers are utilized to understand and generate human-like language.

Similarly, computer vision applications use convolutional neural networks (CNNs) to analyze and interpret images, enabling tasks such as image classification, object detection, and facial recognition.

Meanwhile, in recommendation systems, collaborative filtering and matrix factorization techniques are commonly employed to provide users with personalized suggestions based on their preferences and behaviors.

Understanding the Significance of Machine Learning

Machine learning plays a crucial role across various industries and sectors, such as healthcare, finance, marketing, and transportation. Its widespread use and growing importance make it essential for enthusiasts and professionals alike to have a thorough understanding of machine learning fundamentals, diverse applications, and key concepts.

As technology continues to advance, machine learning techniques become increasingly sophisticated, further amplifying their significance in our data-driven world. Stay informed and competitive by learning the basics and delving into the myriad applications of machine learning.

Programming Languages for Machine Learning

Getting Started with Machine Learning

To become proficient in machine learning applications, it’s important to build a strong foundation in the programming languages often used within the field. Python and R stand out as the most popular choices among practitioners because they offer extensive libraries and frameworks designed to optimize the development process.

By mastering these languages, you’ll have access to powerful tools for data manipulation and analysis, as well as the ability to work with various machine learning algorithms in an efficient and user-friendly manner. So, begin your journey by familiarizing yourself with Python and R, setting the stage for success in the world of machine learning.

Machine Learning Libraries in Python

When working with Python, you can leverage several machine learning libraries, such as TensorFlow, scikit-learn, and Keras. TensorFlow is an open-source library developed by Google that supports various machine learning and deep learning applications. It has gained popularity due to its flexible architecture, which lets you deploy computations across multiple platforms, including CPUs, GPUs, and TPUs. Scikit-learn is another widely used library for machine learning in Python, offering a comprehensive range of tools for classification, regression, clustering, dimensionality reduction, and model selection tasks. Keras, on the other hand, is a high-level neural networks API that runs on top of backends such as TensorFlow, enabling you to experiment with deep learning models using just a few lines of code.

Machine Learning Libraries in R

If you prefer working with R, you can take advantage of powerful libraries like caret, randomForest, and xgboost for machine learning tasks. Caret (Classification And Regression Training) provides a unified interface for hundreds of predictive modeling algorithms, allowing you to easily preprocess data, tune model parameters, and compare the performance of different algorithms. RandomForest is a widely used library for classification and regression tasks, featuring an ensemble learning method based on constructing multiple decision trees during training. Xgboost is an optimized distributed gradient boosting library designed to be highly efficient, flexible, and portable, offering robust support for both regression and classification tasks.

Python vs R

While both Python and R offer extensive functionality for machine learning applications, each programming language has its strengths and weaknesses, depending on your specific requirements and objectives. In general, Python has gained widespread adoption due to its readability, ease of use, and comprehensive library support for various machine learning tasks.

R is known for its rich ecosystem of statistical and data visualization tools, making it ideally suited for exploratory data analysis and statistical modeling. Regardless of your choice between Python and R, becoming proficient in either or both languages will undoubtedly bolster your skills in machine learning applications and pave the way for successful implementation of machine learning algorithms.

Hands-on Projects

To become skilled in machine learning applications, it is crucial to immerse yourself in hands-on projects and practice with real-world scenarios.

By working on these projects, you’ll not only reinforce your understanding of key concepts and methodologies, but also gain valuable experience in solving complex problems using innovative machine learning techniques.

As you develop expertise in programming languages like Python and R, along with practical knowledge of libraries and frameworks such as TensorFlow, scikit-learn, and Keras, you’ll be well-prepared to succeed in the dynamic field of machine learning applications.

Data Preprocessing

As you dive into hands-on projects, you will discover the importance of data preprocessing and cleaning as critical steps in the machine learning pipeline. The quality and structure of the input data largely determine the performance of the resulting models.

Data preprocessing involves transforming raw data into a suitable format for analysis, often including techniques such as handling missing data, scaling features, and encoding categorical variables. By mastering these techniques, you’ll ensure that your data is consistent and well-structured, allowing machine learning algorithms to converge faster and produce more accurate predictions. This practical knowledge will further enhance your skills and proficiency in machine learning applications.

Handling Missing Data

Handling missing data is one of the most common challenges faced during the data preprocessing stage.

Different methods such as imputation, deletion, and interpolation can be employed to deal with missing data based on the nature and extent of the missing values.

Imputation techniques replace missing values with estimates, such as the mean or median value, whereas deletion methods involve removing instances with missing values altogether.

Interpolation, on the other hand, estimates missing values using trends or patterns in the neighboring data points.
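As a rough illustration of the three approaches described above, the sketch below implements mean imputation, row deletion, and linear interpolation in plain Python. The function names and the sample `readings` list are made up for this example, and missing values are assumed to be represented as `None`; libraries such as pandas and scikit-learn provide more complete tools for real projects.

```python
from statistics import mean

def impute_mean(values):
    """Imputation: replace None entries with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in values]

def drop_missing(rows):
    """Deletion: keep only the rows that contain no None entries."""
    return [row for row in rows if all(v is not None for v in row)]

def interpolate_linear(values):
    """Interpolation: fill interior gaps on a straight line between known neighbours."""
    result = list(values)
    known = [i for i, v in enumerate(result) if v is not None]
    for left, right in zip(known, known[1:]):
        step = (result[right] - result[left]) / (right - left)
        for i in range(left + 1, right):
            result[i] = result[left] + step * (i - left)
    return result

# Hypothetical sensor readings with gaps.
readings = [10.0, None, 14.0, None, None, 20.0]
imputed = impute_mean(readings)
interpolated = interpolate_linear(readings)
complete_rows = drop_missing([(1, 2.0), (2, None), (3, 4.0)])
```

Note that this interpolation sketch leaves gaps at the very start or end of a series untouched, since they have no neighbour on one side.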

Feature Scaling

Another essential step in data preprocessing is feature scaling, which standardizes the range of values for each feature in the dataset.

Two common scaling techniques are normalization, where the data is transformed to lie within a specified range, usually [0,1], and standardization, which centers the data around the mean and scales it to have unit variance.

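Feature scaling puts input features on comparable scales, so that a feature with a large numeric range does not dominate the others. This matters most for algorithms that rely on distances or gradient-based optimization, such as k-means, support vector machines, and neural networks.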
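The two scaling techniques can be sketched in a few lines of plain Python. The function names and the `ages` data are illustrative only, and the sketch assumes the feature is not constant (otherwise the divisions below would fail).

```python
from statistics import mean, pstdev

def min_max_normalize(values, low=0.0, high=1.0):
    """Normalization: rescale values linearly into the range [low, high]."""
    lo, hi = min(values), max(values)
    return [low + (v - lo) * (high - low) / (hi - lo) for v in values]

def standardize(values):
    """Standardization: subtract the mean and divide by the standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

# Hypothetical feature column.
ages = [20, 30, 40, 50, 60]
normalized = min_max_normalize(ages)   # smallest value maps to 0, largest to 1
standardized = standardize(ages)       # result has mean 0 and unit variance
```

In a real project, the scaling parameters (minimum, maximum, mean, standard deviation) should be computed on the training data only and then reused on the test data.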

Encoding Categorical Variables

Encoding categorical variables is also an important step in data preprocessing, as most machine learning algorithms expect numerical inputs.

Categorical variables, which represent discrete categories, can be nominal or ordinal.

Techniques such as label encoding and one-hot encoding can be used to convert categorical data into numerical values.

Label encoding assigns a unique integer to each category, whereas one-hot encoding creates binary features for each category, with a one indicating the presence of a category and a zero for its absence.
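A minimal sketch of both encodings, using only the standard library, might look like this. The helper names and the `colors` list are invented for the example; the categories are sorted alphabetically so that the output is deterministic.

```python
def label_encode(categories):
    """Assign one integer per distinct category (alphabetical order)."""
    mapping = {c: i for i, c in enumerate(sorted(set(categories)))}
    return [mapping[c] for c in categories], mapping

def one_hot_encode(categories):
    """Create one binary column per distinct category."""
    levels = sorted(set(categories))
    rows = [[1 if c == level else 0 for level in levels] for c in categories]
    return rows, levels

colors = ["red", "green", "blue", "green"]
labels, mapping = label_encode(colors)
one_hot, levels = one_hot_encode(colors)
```

Because label encoding produces integers, a model may treat them as ordered. That is usually appropriate for ordinal variables, while one-hot encoding is the safer choice for nominal ones.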

Conclusion

Data preprocessing is an essential step in the machine learning process, playing a critical role in ensuring the quality and consistency of input data for accurate and robust machine learning models.

By addressing issues such as missing data, scaling features, and encoding categorical variables, enthusiasts and hobbyists can significantly improve their skill set in machine learning applications and create successful projects.

Supervised Learning

Among the various aspects of machine learning applications, supervised learning stands out as a fundamental component, enabling the understanding and implementation of different predictive models. Supervised learning involves training a model using labeled data, which contains not only the input features but also the corresponding output labels.

This approach allows the model to learn the relationship between the input and output, thereby enabling it to generate predictions on new and unseen data. Some of the most widely used algorithms in supervised learning include linear regression, logistic regression, decision trees, and support vector machines.

Linear Regression

Linear regression is a popular supervised learning algorithm used for predicting continuous output values. It establishes a linear relationship between the input features and output labels, making it particularly useful for modeling data trends and forecasting.
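For a single input feature, the best-fitting line can be computed directly with the ordinary least squares formulas. The sketch below is one way to do that; `fit_line` and the toy data are assumptions made for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    covariance = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    variance = sum((x - x_mean) ** 2 for x in xs)
    slope = covariance / variance
    intercept = y_mean - slope * x_mean
    return slope, intercept

# Toy data generated from y = 2x + 1, so the fit should recover those values.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]
slope, intercept = fit_line(xs, ys)
prediction = slope * 5.0 + intercept  # forecast for an unseen input x = 5
```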

Logistic Regression

Logistic regression focuses on binary classification tasks, where the goal is to predict one of two possible classes for a given input. It applies the logistic (sigmoid) function to a linear combination of the input features to produce a probability between 0 and 1, and a threshold on that probability (commonly 0.5) assigns the class.
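The prediction step of a logistic regression model can be sketched as follows. The weights and bias here are hypothetical values chosen by hand rather than learned from data; training (finding good weights) is not shown.

```python
import math

def sigmoid(z):
    """Logistic function: maps any real number into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(features, weights, bias):
    """Probability of the positive class for one sample."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return sigmoid(z)

def predict_class(features, weights, bias, threshold=0.5):
    """Turn the probability into a class label using a threshold."""
    return 1 if predict_proba(features, weights, bias) >= threshold else 0

# Hypothetical, hand-picked parameters (not the result of training).
weights, bias = [1.5, -2.0], 0.25
probability = predict_proba([2.0, 1.0], weights, bias)
positive = predict_class([2.0, 1.0], weights, bias)
negative = predict_class([0.0, 1.0], weights, bias)
```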

Decision Trees

Decision trees are another fundamental technique in supervised learning, utilized for both regression and classification tasks. These models involve constructing a tree-like representation where each internal node defines a decision based on feature values, and the leaf nodes represent the output labels.
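To make the tree structure concrete, the sketch below represents a small, hand-built tree as nested dictionaries and walks it to make a prediction. The feature names, thresholds, and labels are invented for this example, and the code only shows prediction; learning the splits from data is a separate step that libraries such as scikit-learn handle.

```python
# Internal nodes test one feature against a threshold; leaves carry a label.
tree = {
    "feature": "outlook_sunny", "threshold": 0.5,
    "left": {"label": "play"},                      # not sunny
    "right": {
        "feature": "humidity", "threshold": 70,
        "left": {"label": "play"},                  # sunny, low humidity
        "right": {"label": "stay home"},            # sunny, high humidity
    },
}

def predict(node, sample):
    """Follow decisions from the root until a leaf node is reached."""
    while "label" not in node:
        go_left = sample[node["feature"]] <= node["threshold"]
        node = node["left"] if go_left else node["right"]
    return node["label"]

decision = predict(tree, {"outlook_sunny": 1, "humidity": 85})
```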

Support Vector Machines (SVM)

Support vector machines (SVMs) serve as an essential tool for classification and regression tasks in supervised learning. By utilizing kernel functions, SVMs can implicitly map input data into a higher-dimensional space, where classes that cannot be separated by a straight line in the original space may become separable. The algorithm seeks the hyperplane that maximizes the margin between classes while minimizing classification errors, resulting in a robust and accurate approach to predictive modeling.
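The idea behind kernel functions can be checked numerically. The sketch below uses a degree-2 polynomial kernel on two-dimensional inputs and shows that it gives the same value as a dot product in an explicit three-dimensional feature space. This is only an illustration of the kernel idea, not a full SVM, and the function names are assumptions made for the example.

```python
import math

def poly_kernel(x, z):
    """Degree-2 polynomial kernel, computed in the original 2-D space."""
    return (x[0] * z[0] + x[1] * z[1]) ** 2

def feature_map(x):
    """Explicit mapping into the 3-D space that the kernel corresponds to."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)

def dot(a, b):
    return sum(p * q for p, q in zip(a, b))

x, z = (1.0, 2.0), (3.0, 0.5)
kernel_value = poly_kernel(x, z)                       # no mapping needed
explicit_value = dot(feature_map(x), feature_map(z))   # same value, more work
```

Both computations agree, which is why an SVM can work in the higher-dimensional space without ever constructing it.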

Grasping the principles of supervised learning and becoming acquainted with its prevalent algorithms lays the groundwork for successfully implementing machine learning in various applications. By mastering techniques such as linear regression, logistic regression, decision trees, and support vector machines, enthusiasts and hobbyists acquire the skills necessary to create high-performing predictive models, advancing their expertise in the ever-expanding realm of machine learning applications.

Unsupervised Learning

Delving into unsupervised learning is also imperative for machine learning applications, as it enables algorithms to interpret complex and unlabeled datasets. By seamlessly combining both supervised and unsupervised learning approaches, individuals can further enhance their ability to develop sophisticated and efficient machine learning models that can tackle a multitude of real-world problems and challenges.

Compared to supervised learning, where labeled data guides the learning process, unsupervised learning involves finding hidden patterns and structures within datasets without any predefined outputs.

Among the techniques employed in unsupervised learning are clustering algorithms like k-means and hierarchical clustering.

Clustering algorithms play a vital role in many machine learning applications, as they can segment and categorize datasets into groups based on similarity or distance metrics.

The k-means algorithm, for instance, is widely used for its simplicity and efficiency. K-means assigns data points to a specified number of clusters by iteratively updating their centroids to minimize the sum of squared distances within each group.

On the other hand, hierarchical clustering involves building a tree-like structure, where similar data points progressively merge into larger clusters.
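The k-means loop described above (assign each point to its nearest centroid, then move each centroid to the mean of its points) can be sketched as follows. To keep the example short it works on one-dimensional data with hand-picked starting centroids; both are simplifying assumptions, and `kmeans_1d` is a name made up for this sketch.

```python
def kmeans_1d(points, centroids, iterations=10):
    """Basic k-means on 1-D data; returns final centroids and clusters."""
    for _ in range(iterations):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: (p - centroids[i]) ** 2)
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster
        # (an empty cluster keeps its previous centroid).
        centroids = [
            sum(c) / len(c) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
    return centroids, clusters

points = [1.0, 1.5, 2.0, 9.0, 10.0, 11.0]
centroids, clusters = kmeans_1d(points, centroids=[0.0, 5.0])
```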

Dimensionality reduction techniques, such as principal component analysis (PCA) and t-distributed stochastic neighbor embedding (t-SNE), are also essential unsupervised learning tools.

These methods help simplify high-dimensional datasets by transforming them into a lower-dimensional space while preserving most of the original data’s structure and information.

PCA, one of the most widely used dimensionality reduction techniques, achieves its goal by identifying directions, or principal components, in which the variance of the data is maximized.

In contrast, t-SNE is a nonlinear dimensionality reduction method, which is particularly effective at preserving the local structure of the data.
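For two-dimensional data, the first principal component can be found with a short sketch like the one below. It builds the covariance matrix and uses power iteration to find the direction of greatest variance. Power iteration is just one convenient way to do this and is an assumption of this example; libraries typically use other numerical methods. t-SNE is considerably more involved and is not sketched here.

```python
import math

def first_principal_component(points, iterations=100):
    """Return the direction of maximum variance and the 1-D projections."""
    n = len(points)
    mean_x = sum(p[0] for p in points) / n
    mean_y = sum(p[1] for p in points) / n
    centered = [(x - mean_x, y - mean_y) for x, y in points]
    # Entries of the 2x2 covariance matrix.
    sxx = sum(x * x for x, _ in centered) / n
    sxy = sum(x * y for x, y in centered) / n
    syy = sum(y * y for _, y in centered) / n
    # Power iteration: repeatedly multiply by the covariance matrix and normalize.
    # (This fails if the start vector is exactly orthogonal to the answer.)
    vx, vy = 1.0, 0.0
    for _ in range(iterations):
        wx, wy = sxx * vx + sxy * vy, sxy * vx + syy * vy
        norm = math.hypot(wx, wy)
        vx, vy = wx / norm, wy / norm
    projections = [x * vx + y * vy for x, y in centered]
    return (vx, vy), projections

# Toy data lying on the line y = 2x, so the component should point along (1, 2).
component, projections = first_principal_component([(0, 0), (1, 2), (2, 4), (3, 6)])
```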

Understanding how the various unsupervised learning techniques, such as clustering algorithms and dimensionality reduction methods like PCA and t-SNE, can be applied to machine learning applications is crucial for making the most of the information hidden within complex, unlabeled datasets.

Incorporating these methods into machine learning projects not only enhances the model’s ability to make sense of the data but also affords more efficient processing, better visualization, and ultimately improved performance.

As an enthusiast or hobbyist, having a solid foundation in unsupervised learning techniques will equip you with the skills needed to tackle a wide range of machine learning challenges in developing impactful applications.

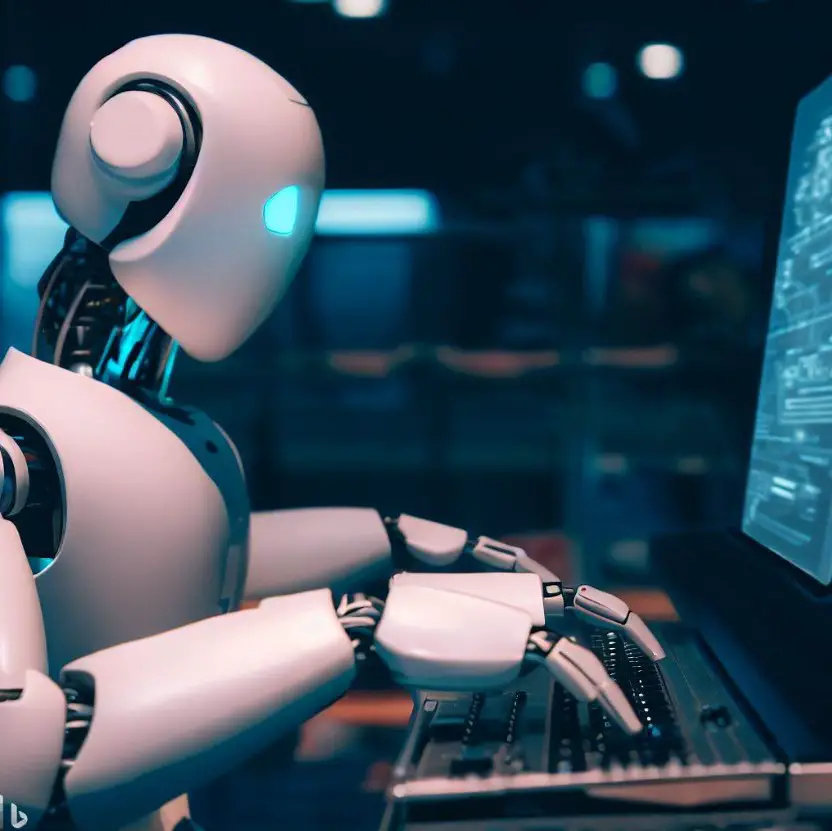

Deep Learning

In particular, deep learning, an advanced subfield of machine learning, has gained significant traction due to its effectiveness in handling large and complex data sets. A key component of deep learning is the neural network, a model built from layers of interconnected units and loosely inspired by the way the human brain processes information.

Neural networks are particularly effective in addressing challenges related to computer vision and natural language processing, two complex areas that require advanced pattern recognition and data interpretation.

Convolutional neural networks (CNNs)

CNNs are a specialized type of neural network often employed for computer vision applications. By using a series of layers to scan and process input images, CNNs can identify and differentiate between various image features. This ability to ‘see’ and interpret complex visual data has led to major breakthroughs in fields such as image recognition, autonomous vehicles, and facial recognition technology.

Additionally, CNNs can be applied to non-image data, such as time series, by using one-dimensional convolutions that slide along the sequence.
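The core operation of a convolutional layer is a small filter sliding across the input. The sketch below shows a one-dimensional version with a hand-picked filter that responds to rising edges in a signal. In a real CNN the filter values are learned during training; the filter and signal here are assumptions for illustration.

```python
def conv1d(signal, kernel):
    """Slide the kernel over the signal and take a dot product at each position.

    This is the 'valid' sliding dot product (cross-correlation) that deep
    learning libraries commonly call convolution.
    """
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]

def relu(values):
    """Common activation function: negative responses are set to zero."""
    return [max(0.0, v) for v in values]

signal = [0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0]
edge_kernel = [-1.0, 1.0]                       # responds where the signal rises
feature_map = relu(conv1d(signal, edge_kernel))
```

The resulting feature map is non-zero only at the position where the signal steps up, which is the kind of local feature detection the paragraph above describes.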

Recurrent neural networks (RNNs)

RNNs are a popular choice when it comes to natural language processing (NLP). The primary advantage of RNNs is their ability to handle and process sequences of data, making them particularly suitable for understanding and predicting patterns in textual information. RNNs have been widely used in applications such as text classification, sentiment analysis, machine translation, and summarization.
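What makes a network recurrent is a hidden state that is carried from one step of the sequence to the next. The sketch below shows this with a single scalar hidden unit; the weights are hypothetical hand-picked numbers, whereas a real RNN uses learned weight matrices and vector-valued states.

```python
import math

def rnn_forward(inputs, w_x, w_h, bias, h0=0.0):
    """Run a one-unit recurrent cell over a sequence and return all hidden states."""
    h = h0
    states = []
    for x in inputs:
        # The new state depends on the current input AND the previous state.
        h = math.tanh(w_x * x + w_h * h + bias)
        states.append(h)
    return states

# The same values in a different order lead to different final states,
# which is how the network captures sequence information.
early_signal = rnn_forward([1.0, 0.0, 0.0], w_x=1.0, w_h=0.5, bias=0.0)
late_signal = rnn_forward([0.0, 0.0, 1.0], w_x=1.0, w_h=0.5, bias=0.0)
```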

Long short-term memory (LSTM) networks

LSTM networks are a particularly powerful and advanced type of RNN. They incorporate memory cells that allow the network to learn and retain long-range dependencies, addressing a limitation of traditional RNNs, which struggle with sequences that stretch over long time periods or many words. This has improved the capabilities of machine learning systems in areas like text generation, speech recognition, and detecting trends in large time-series datasets.

In conclusion, the world of deep learning has made tremendous strides due to the development and application of various types of neural networks, such as CNNs, RNNs, and LSTMs.

These technologies have not only expanded the possibilities of machine learning applications, but have also shown that machines can approach human-level performance on certain perception and language tasks.

As enthusiasts and hobbyists aim to hone their skills in machine learning, a deep understanding of these neural networks and their applications is essential for unlocking new potential innovations and overcoming increasingly complex challenges.

Reinforcement Learning

Reinforcement learning, a subset of machine learning, revolves around developing algorithms that enable agents to make better decisions in a given environment through interaction and learning from outcomes. Such problems are typically formalized as Markov decision processes (MDPs), which describe the environment in terms of states, actions, transition probabilities, and rewards.

Q-learning, a popular value-based reinforcement learning algorithm, is designed to find the optimal action-value function, which estimates the expected cumulative reward of taking a given action in a given state. Deep Q-networks (DQNs) build on Q-learning by using deep neural networks as function approximators to estimate the action-value function when the state space is too large for a table.
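A tabular version of Q-learning fits in a few lines. The sketch below trains an agent on a made-up four-state corridor where moving right from the last non-terminal state reaches the goal and earns a reward of 1. The environment, the hyperparameter values, and the names `step` and `train` are all assumptions for this example.

```python
import random

N_STATES, GOAL = 4, 3
ACTIONS = (0, 1)  # 0 = move left, 1 = move right

def step(state, action):
    """Toy corridor environment: returns (next_state, reward, done)."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    done = next_state == GOAL
    return next_state, (1.0 if done else 0.0), done

def train(episodes=300, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy choice; ties are broken randomly.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.choice(ACTIONS)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, action)
            # Q-learning update: move the estimate toward reward + discounted best next value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q

q_table = train()
greedy_policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(GOAL)]
```

After training, the greedy policy chooses "right" in every non-terminal state, and the learned values shrink by the discount factor with each step away from the goal.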

Various applications can benefit from reinforcement learning algorithms, including Q-learning and deep Q-networks, for optimizing decision-making and control processes. Implementing reinforcement learning in machine learning applications allows for the development of self-improving systems that learn from experience and dynamically adapt their behavior.

These continuously evolving systems can perform better over time and discover innovative solutions to previously unsolved problems.

Model Evaluation and Hyperparameter Tuning

Model evaluation and hyperparameter tuning are essential processes in the development of machine learning applications. Evaluation measures a model’s performance by comparing its predictions against actual outcomes, while tuning adjusts the settings that control training to improve that performance. Both data scientists and hobbyists should prioritize these steps, as they help ensure that machine learning applications are accurate, reliable, and efficient.

By successfully assessing and tuning machine learning models, enthusiasts and professionals alike can build dependable systems that generalize well to new information, ultimately providing a higher level of performance in real-world applications.

Cross-validation is a widely used technique for assessing the performance of machine learning models, especially in applications that require a high degree of precision. In k-fold cross-validation, the dataset is divided into k subsets or “folds”; the model is trained on k-1 folds and evaluated on the remaining fold, and the process repeats until every fold has served once as the evaluation set. This approach helps to identify overfitting, wherein a model performs exceptionally well on the training data but poorly on new, unseen data. By training and testing a model on different subsets of the dataset, enthusiasts can gain valuable insights into its generalization capabilities, which is a crucial aspect of model evaluation.
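The fold-splitting logic can be sketched as a small generator that yields training and test indices for each round. The name `k_fold_indices` is made up for this example, and the sketch does not shuffle the data, which you would normally do first unless the order matters (as in time series).

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for each of the k folds."""
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    indices = list(range(n_samples))
    start = 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size

splits = list(k_fold_indices(n_samples=10, k=3))
```

Each sample appears in exactly one test fold, so averaging the scores across the folds uses every data point for evaluation once.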

Performance metrics are essential for quantifying a machine learning model’s effectiveness and determining which aspects need improvement. Commonly used metrics include accuracy, precision, recall, F1 score, and area under the receiver operating characteristic curve (ROC AUC) for classification problems, and mean absolute error (MAE), mean squared error (MSE), and R² score for regression problems. By selecting appropriate performance metrics, enthusiasts can effectively monitor the performance of their machine learning applications and make informed decisions regarding model selection and enhancement strategies.
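Several of these metrics are simple enough to compute by hand, as the sketch below shows for binary classification and for regression. The labels and predictions are made-up data, and the code assumes at least one positive prediction and one positive label so that no division by zero occurs. ROC AUC is omitted because it requires predicted probabilities rather than class labels.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": sum(1 for t, p in pairs if t == p) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / (precision + recall),
    }

def regression_metrics(y_true, y_pred):
    """MAE, MSE, and R squared for numeric targets."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in errors)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "mae": sum(abs(e) for e in errors) / n,
        "mse": ss_res / n,
        "r2": 1 - ss_res / ss_tot,
    }

clf = classification_metrics([1, 0, 1, 1, 0, 1, 0, 0], [1, 0, 0, 1, 1, 1, 0, 0])
reg = regression_metrics([3.0, 5.0, 2.0], [2.5, 5.0, 2.5])
```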

Hyperparameter tuning is a critical step in machine learning applications, as it enables practitioners to optimize their model’s performance by adjusting various parameters. Grid search, random search, and Bayesian optimization are popular techniques for finding the optimal set of hyperparameters.

Grid search involves exhaustively testing a predefined set of hyperparameter values, while random search tests randomly-selected combinations of hyperparameters within a specific range. Bayesian optimization, on the other hand, employs a probabilistic model to guide the search for optimal hyperparameters, enabling a more efficient exploration of the search space.
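The difference between grid search and random search can be sketched with a stand-in scoring function. Here `validation_score` is a hypothetical placeholder for "train the model with these hyperparameters and return its cross-validated score"; the hyperparameter names and ranges are also assumptions. Bayesian optimization needs a probabilistic model of the score and is left out of this sketch.

```python
import itertools
import random

def validation_score(learning_rate, depth):
    """Hypothetical stand-in for a cross-validated score (higher is better).

    By construction it peaks at learning_rate=0.1 and depth=4.
    """
    return -((learning_rate - 0.1) ** 2) - 0.01 * (depth - 4) ** 2

def grid_search(grid):
    """Try every combination of the listed values."""
    names = list(grid)
    candidates = [dict(zip(names, combo)) for combo in itertools.product(*grid.values())]
    return max(candidates, key=lambda params: validation_score(**params))

def random_search(ranges, n_trials, seed=0):
    """Sample a fixed number of random combinations from the given ranges."""
    rng = random.Random(seed)
    candidates = [
        {
            "learning_rate": rng.uniform(*ranges["learning_rate"]),
            "depth": rng.randint(*ranges["depth"]),
        }
        for _ in range(n_trials)
    ]
    return max(candidates, key=lambda params: validation_score(**params))

best_grid = grid_search({"learning_rate": [0.01, 0.1, 1.0], "depth": [2, 4, 8]})
best_random = random_search({"learning_rate": (0.01, 1.0), "depth": (2, 8)}, n_trials=20)
```

Grid search evaluates all nine combinations here, while random search spends a fixed budget of 20 trials no matter how many hyperparameters are involved, which is why it tends to scale better to large search spaces.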

Model evaluation and hyperparameter tuning are crucial aspects of implementing successful machine learning applications, and it’s important for enthusiasts and practitioners to understand these concepts. Effective techniques like cross-validation and performance metrics enable developers to properly measure the efficacy of their models.

Additionally, methods such as grid search, random search, and Bayesian optimization aid in optimizing model performance. By mastering these tactics, individuals can enhance the accuracy and reliability of their machine learning creations.

Machine Learning in the Real World

Over the years, machine learning has gained significant traction and has been integrated into numerous real-world applications that affect our everyday lives. Recommendation systems, which are prevalent on platforms like Amazon, Netflix, and Spotify, are a prime example of this emerging technology.

These systems leverage machine learning algorithms to examine user behavior, preferences, and browsing history, generating tailored suggestions for products, movies, and songs. Consequently, users enjoy a more personalized experience, leading to increased user engagement and sales for businesses.

Another critical application of machine learning can be observed in fraud detection, particularly in the finance and banking industries. Machine learning algorithms are employed to analyze vast amounts of transactional data to identify patterns and anomalies that could indicate fraudulent activity.

By continuously learning from new data, these algorithms become more adept at detecting potential threats, allowing organizations to take preemptive measures and minimize damage caused by fraudulent transactions. This has considerably improved the security and reliability of online banking and financial services.

Sentiment analysis is another practical application of machine learning that interprets and extracts subjective information from text data, like tweets, comments, or reviews. Companies use this technique to gauge public opinion and sentiment regarding their products, services, or even competitors.

Machine learning algorithms, through natural language processing, can classify vast amounts of text data as positive, negative, or neutral, providing valuable insights for marketing strategies, customer service improvements, and overall brand perception.

While machine learning offers numerous benefits, it is essential to acknowledge the ethical and societal implications surrounding its use. As more organizations rely on machine learning to automate decision-making processes, the potential for biased and discriminatory outcomes based on flawed data or algorithms arises. The fairness, transparency, and accountability of these systems must be considered, as they could inadvertently perpetuate existing inequalities and prejudices.

Lastly, the growing presence of machine learning in fields like healthcare also raises questions about data privacy and security. Medical professionals and researchers utilize machine learning algorithms to analyze patient data, develop new treatment plans, and diagnose illnesses.

However, this reliance on vast amounts of personal health information exposes potential vulnerabilities to data breaches or misuse. It is critical to establish stringent data protection measures and educate stakeholders about the ethical responsibilities surrounding machine learning to ensure its safe and responsible deployment in all applications.

As we continue to explore the vast realm of machine learning, it becomes apparent that its potential is immense. Armed with knowledge of various algorithms, programming languages, and evaluation techniques, enthusiasts and hobbyists can create wonders in the field.

With practical applications spanning across industries, machine learning continues to break new ground, changing the way we live, work, and interact. An ongoing journey of discovery, the pursuit of machine learning expertise promises not only personal growth but also the opportunity to contribute to the global progress driven by this transformative technology.

I’m Dave, a passionate advocate and follower of all things AI. I am captivated by the marvels of artificial intelligence and how it continues to revolutionize our world every single day.
